Hive 中的复合数据结构

原文:http://my.oschina.net/leejun2005/blog/120463

原作者:xrzs

学习,谢谢

目前 hive 支持的复合数据类型有以下几种:

map

(key1, value1, key2, value2, ...) Creates a map with the given key/value pairs

struct

(val1, val2, val3, ...) Creates a struct with the given field values. Struct field names will be col1, col2, ...

named_struct

(name1, val1, name2, val2, ...) Creates a struct with the given field names and values. (as of Hive 0.8.0)

array

(val1, val2, ...) Creates an array with the given elements

create_union

(tag, val1, val2, ...) Creates a union type with the value that is being pointed to by the tag parameter

一、map、struct、array 这3种的用法:

1、Array的使用

创建数据库表,以array作为数据类型

create table person(name string,work_locations array<string>)

ROW FORMAT DELIMITED

FIELDS TERMINATED BY '\t'

COLLECTION ITEMS TERMINATED BY ',';

数据

biansutao beijing,shanghai,tianjin,hangzhou

linan changchu,chengdu,wuhan

入库数据

LOAD DATA LOCAL INPATH '/home/hadoop/person.txt' OVERWRITE INTO TABLE person;

查询

hive> select * from person;

biansutao ["beijing","shanghai","tianjin","hangzhou"]

linan ["changchu","chengdu","wuhan"]

Time taken: 0.355 seconds

hive> select name from person;

linan

biansutao

Time taken: 12.397 seconds

hive> select work_locations[0] from person;

changchu

beijing

Time taken: 13.214 seconds

hive> select work_locations from person;

["changchu","chengdu","wuhan"]

["beijing","shanghai","tianjin","hangzhou"]

Time taken: 13.755 seconds

hive> select work_locations[3] from person;

NULL

hangzhou

Time taken: 12.722 seconds

hive> select work_locations[4] from person;

NULL

NULL

Time taken: 15.958 seconds2、Map 的使用

创建数据库表

create table score(name string, score map<string,int>)

ROW FORMAT DELIMITED

FIELDS TERMINATED BY '\t'

COLLECTION ITEMS TERMINATED BY ','

MAP KEYS TERMINATED BY ':';

要入库的数据

biansutao '数学':80,'语文':89,'英语':95

jobs '语文':60,'数学':80,'英语':99

入库数据

LOAD DATA LOCAL INPATH '/home/hadoop/score.txt' OVERWRITE INTO TABLE score;

查询

hive> select * from score;

biansutao {"数学":80,"语文":89,"英语":95}

jobs {"语文":60,"数学":80,"英语":99}

Time taken: 0.665 seconds

hive> select name from score;

jobs

biansutao

Time taken: 19.778 seconds

hive> select t.score from score t;

{"语文":60,"数学":80,"英语":99}

{"数学":80,"语文":89,"英语":95}

Time taken: 19.353 seconds

hive> select t.score['语文'] from score t;

60

89

Time taken: 13.054 seconds

hive> select t.score['英语'] from score t;

99

95

Time taken: 13.769 seconds3、Struct 的使用

创建数据表

CREATE TABLE test(id int,course struct<course:string,score:int>)

ROW FORMAT DELIMITED

FIELDS TERMINATED BY '\t'

COLLECTION ITEMS TERMINATED BY ',';

数据

1 english,80

2 math,89

3 chinese,95

入库

LOAD DATA LOCAL INPATH '/home/hadoop/test.txt' OVERWRITE INTO TABLE test;

查询

hive> select * from test;

OK

1 {"course":"english","score":80}

2 {"course":"math","score":89}

3 {"course":"chinese","score":95}

Time taken: 0.275 seconds

hive> select course from test;

{"course":"english","score":80}

{"course":"math","score":89}

{"course":"chinese","score":95}

Time taken: 44.968 seconds

select t.course.course from test t;

english

math

chinese

Time taken: 15.827 seconds

hive> select t.course.score from test t;

80

89

95

Time taken: 13.235 seconds4、数据组合 (不支持组合的复杂数据类型)

LOAD DATA LOCAL INPATH '/home/hadoop/test.txt' OVERWRITE INTO TABLE test;

create table test1(id int,a MAP<STRING,ARRAY<STRING>>)

row format delimited fields terminated by '\t'

collection items terminated by ','

MAP KEYS TERMINATED BY ':';

1 english:80,90,70

2 math:89,78,86

3 chinese:99,100,82

LOAD DATA LOCAL INPATH '/home/hadoop/test1.txt' OVERWRITE INTO TABLE test1;二、hive中的一些不常见函数的用法:

常见的函数就不废话了,和标准sql类似,下面我们要聊到的基本是HQL里面专有的函数,

hive里面的函数大致分为如下几种:Built-in、Misc.、UDF、UDTF、UDAF

我们就挑几个标准SQL里没有,但是在HIVE SQL在做统计分析常用到的来说吧。

1、array_contains (Collection Functions)

这是内置的对集合进行操作的函数,用法举例:

create EXTERNAL table IF NOT EXISTS userInfo (id int,sex string, age int, name string, email string,sd string, ed string) ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t' location '/hive/dw';select * from userinfo where sex='male' and (id!=1 and id !=2 and id!=3 and id!=4 and id!=5) and age < 30;

select * from (select * from userinfo where sex='male' and !array_contains(split('1,2,3,4,5',','),cast(id as string))) tb1 where tb1.age < 30;其中建表所用的测试数据你可以用如下链接的脚本自动生成:

http://my.oschina.net/leejun2005/blog/76631

2、get_json_object (Misc. Functions)

测试数据:

first {"store":{"fruit":[{"weight":8,"type":"apple"},{"weight":9,"type":"pear"}],"bicycle":{"price":19.951,"color":"red1"}},"email":"amy@only_for_json_udf_test.net","owner":"amy1"} third

first {"store":{"fruit":[{"weight":9,"type":"apple"},{"weight":91,"type":"pear"}],"bicycle":{"price":19.952,"color":"red2"}},"email":"amy@only_for_json_udf_test.net","owner":"amy2"} third

first {"store":{"fruit":[{"weight":10,"type":"apple"},{"weight":911,"type":"pear"}],"bicycle":{"price":19.953,"color":"red3"}},"email":"amy@only_for_json_udf_test.net","owner":"amy3"} third

create external table if not exists t_json(f1 string, f2 string, f3 string) row format delimited fields TERMINATED BY ' ' location '/test/json'

select get_json_object(t_json.f2, '$.owner') from t_json;

SELECT * from t_json where get_json_object(t_json.f2, '$.store.fruit[0].weight') = 9;

SELECT get_json_object(t_json.f2, '$.non_exist_key') FROM t_json;这里尤其要注意UDTF的问题,官方文档有说明:

json_tuple

A new json_tuple() UDTF is introduced in hive 0.7. It takes a set of names (keys) and a JSON string, and returns a tuple of values using one function. This is much more efficient than calling GET_JSON_OBJECT to retrieve more than one key from a single JSON string. In any case where a single JSON string would be parsed more than once, your query will be more efficient if you parse it once, which is what JSON_TUPLE is for. As JSON_TUPLE is a UDTF, you will need to use the LATERAL VIEW syntax in order to achieve the same goal.

For example,

select a.timestamp, get_json_object(a.appevents, '$.eventid'), get_json_object(a.appenvets, '$.eventname') from log a;should be changed to

select a.timestamp, b.*

from log a lateral view json_tuple(a.appevent, 'eventid', 'eventname') b as f1, f2;UDTF(User-Defined Table-Generating Functions) 用来解决 输入一行输出多行(On-to-many maping) 的需求。

通过Lateral view可以方便的将UDTF得到的行转列的结果集合在一起提供服务,因为直接在SELECT使用UDTF会存在限制,即仅仅能包含单个字段,不光是多个UDTF,仅仅单个UDTF加上其他字段也是不可以,hive提示在UDTF中仅仅能有单一的表达式。如下:

hive> select my_test(“abcef:aa”) as qq,’abcd’ from sunwg01;

FAILED: Error in semantic analysis: Only a single expression in the SELECT clause is supported with UDTF’s

使用Lateral view可以实现上面的需求,Lateral view语法如下:

lateralView: LATERAL VIEW udtf(expression) tableAlias AS columnAlias (‘,’ columnAlias)*

fromClause: FROM baseTable (lateralView)*

hive> create table sunwg ( a array, b array )

> ROW FORMAT DELIMITED

> FIELDS TERMINATED BY ‘\t’

> COLLECTION ITEMS TERMINATED BY ‘,’;

OK

Time taken: 1.145 seconds

hive> load data local inpath ‘/home/hjl/sunwg/sunwg.txt’ overwrite into table sunwg;

Copying data from file:/home/hjl/sunwg/sunwg.txt

Loading data to table sunwg

OK

Time taken: 0.162 seconds

hive> select * from sunwg;

OK

[10,11] ["tom","mary"]

[20,21] ["kate","tim"]

Time taken: 0.069 seconds

hive>

> SELECT a, name

> FROM sunwg LATERAL VIEW explode(b) r1 AS name;

OK

[10,11] tom

[10,11] mary

[20,21] kate

[20,21] tim

Time taken: 8.497 seconds

hive> SELECT id, name

> FROM sunwg LATERAL VIEW explode(a) r1 AS id

> LATERAL VIEW explode(b) r2 AS name;

OK

10 tom

10 mary

11 tom

11 mary

20 kate

20 tim

21 kate

21 tim

Time taken: 9.687 seconds

3、parse_url_tuple

测试数据:

url1 http://facebook.com/path1/p.php?k1=v1&k2=v2#Ref1

url2 https://cwiki.apache.org/confluence/display/Hive/LanguageManual+UDF#LanguageManualUDF-getjsonobject

url3 https://www.google.com.hk/#hl=zh-CN&newwindow=1&safe=strict&q=hive+translate+example&oq=hive+translate+example&gs_l=serp.3...10174.11861.6.12051.8.8.0.0.0.0.132.883.0j7.7.0...0.0...1c.1j4.8.serp.0B9C1T_n0Hs&bav=on.2,or.&bvm=bv.44770516,d.aGc&fp=e13e41a6b9dab3f6&biw=1241&bih=589

create external table if not exists t_url(f1 string, f2 string) row format delimited fields TERMINATED BY ' ' location '/test/url';

SELECT f1, b.* FROM t_url LATERAL VIEW parse_url_tuple(f2, 'HOST', 'PATH', 'QUERY', 'QUERY:k1') b as host, path, query, query_id;结果:

url1 facebook.com /path1/p.php k1=v1&k2=v2 v1

url2 cwiki.apache.org /confluence/display/Hive/LanguageManual+UDF NULL NULL

url3 www.google.com.hk / NULL NULL

4、explode

explode 是一个 hive 内置的表生成函数:Built-in Table-Generating Functions (UDTF),主要是解决 1 to N 的问题,即它可以把一行输入拆成多行,比如一个 array 的每个元素拆成一行,作为一个虚表输出。它有如下需要注意的地方:

Using the syntax "SELECT udtf(col) AS colAlias..." has a few limitations:

No other expressions are allowed in SELECT

SELECT pageid, explode(adid_list) AS myCol... is not supported

UDTF's can't be nested

SELECT explode(explode(adid_list)) AS myCol... is not supported

GROUP BY / CLUSTER BY / DISTRIBUTE BY / SORT BY is not supported

SELECT explode(adid_list) AS myCol ... GROUP BY myCol is not supported从上面的原理与语法上可知,

select 列中不能 udtf 和其它非 udtf 列混用,

udtf 不能嵌套,

不支持 GROUP BY / CLUSTER BY / DISTRIBUTE BY / SORT BY

还有 select 中出现的 udtf 一定需要列别名,否则会报错:

SELECT explode(myCol) AS myNewCol FROM myTable;

SELECT explode(myMap) AS (myMapKey, myMapValue) FROM myMapTable;

SELECT posexplode(myCol) AS pos, myNewCol FROM myTable;5、lateral view

lateral view 是Hive中提供给UDTF的conjunction,它可以解决UDTF不能添加额外的select列的问题。当我们想对hive表中某一列进行split之后,想对其转换成1 to N的模式,即一行转多列。hive不允许我们在UDTF函数之外,再添加其它select语句。

如下,我们想将登录某个游戏的用户id放在一个字段user_ids里,对每一行数据用UDTF后输出多行。

select game_id, explode(split(user_ids,'\\[\\[\\[')) as user_id from login_game_log where dt='2014-05-15' ;

FAILED: Error in semantic analysis: UDTF's are not supported outside the SELECT clause, nor nested in expressions。提示语法分析错误,UDTF不支持函数之外的select 语句,如果我们想支持怎么办呢?接下来就是Lateral View 登场的时候了。

Lateral view 其实就是用来和像类似explode这种UDTF函数联用的。lateral view 会将UDTF生成的结果放到一个虚拟表中,然后这个虚拟表(1 to N)会和输入行即每个game_id进行join 来达到连接UDTF外的select字段的目的(源表和拆分的虚表按行做行内 1 join N 的直接连接),这也是为什么 LATERAL VIEW udtf(expression) 后面需要表别名和列别名的原因。

Lateral View Syntax

lateralView: LATERAL VIEW udtf(expression) tableAlias AS columnAlias (',' columnAlias)*

fromClause: FROM baseTable (lateralView)*

可以看出,可以在2个地方用Lateral view:

在udtf前面用

在from baseTable后面用

例如:

pageid adid_list

front_page [1, 2, 3]

contact_page [3, 4, 5]

SELECT pageid, adid

FROM pageAds LATERAL VIEW explode(adid_list) adTable AS adid;pageid adid

front_page 1

front_page 2

front_page 3

contact_page 3

contact_page 4

contact_page 5

From语句后可以跟多个Lateral View。

A FROM clause can have multiple LATERAL VIEW clauses. Subsequent LATERAL VIEWS can reference columns from any of the tables appearing to the left of the LATERAL VIEW.

给定数据:

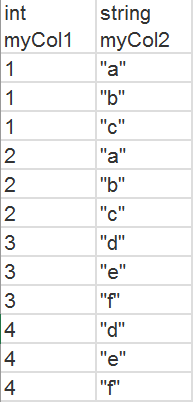

Array<int> col1 Array<string> col2

[1, 2] [a", "b", "c"]

[3, 4] [d", "e", "f"]

转换目标:

想同时把第一列和第二列拆开,类似做笛卡尔乘积。

我们可以这样写:

SELECT myCol1, myCol2 FROM baseTable

LATERAL VIEW explode(col1) myTable1 AS myCol1

LATERAL VIEW explode(col2) myTable2 AS myCol2;还有一种情况,如果UDTF转换的Array是空的怎么办呢?

在Hive0.12里面会支持outer关键字,如果UDTF的结果是空,默认会被忽略输出。

如果加上outer关键字,则会像left outer join 一样,还是会输出select出的列,而UDTF的输出结果是NULL。

总结:

Lateral View通常和UDTF一起出现,为了解决UDTF不允许在select字段的问题。

Multiple Lateral View可以实现类似笛卡尔乘积。

Outer关键字可以把不输出的UDTF的空结果,输出成NULL,防止丢失数据。

三、ref:

http://blog.csdn.net/wf1982/article/details/7474601

http://www.cnblogs.com/ggjucheng/archive/2013/01/08/2850797.html

http://www.oratea.net/?p=650

https://cwiki.apache.org/confluence/display/Hive/LanguageManual+UDF#LanguageManualUDF-parseurltuple

https://cwiki.apache.org/confluence/display/Hive/Tutorial

http://blog.csdn.net/inte_sleeper/article/details/7196114 hive lateral view语句:列拆分成行

https://cwiki.apache.org/confluence/display/Hive/LanguageManual+UDF#LanguageManualUDF-explode

http://blog.csdn.net/oopsoom/article/details/26001307 Lateral View用法 与 Hive UDTF explode

Hive 中的复合数据结构相关推荐

- Hive 中的复合数据结构简介以及一些函数的用法说明

目前 hive 支持的复合数据类型有以下几种: map (key1, value1, key2, value2, ...) Creates a map with the given key/value ...

- PL/SQL七复合数据结构

PL/SQL有两种复合数据结构:记录和集合.记录由不同的域组成,集合由不同的元素组成. 记录: 记录是PL/SQL的一种复合数据结构,scalar数据类型和其他数据类型只是简单的在包一级进行预定义,但 ...

- Hive中Join的 MR 底层原理

Hive中的Join可分为Common Join(Reduce阶段完成join)和Map Join(Map阶段完成join) 一. Hive Common Join 如果不指定MapJoin或者不符合 ...

- Hive中数据库和表的操作(HSQL)

数仓管理工具Hive可以将HDFS文件中的结构化数据映射成表, 利用HSQL对表进行分析,HSQL的底层运行机制,默认是MapReduce计算,也可以替换成Spark.Tez.Flink 计算结果存储 ...

- hive中字段长度函数_Hive常用函数总结

hive函数的分类 hive的客户端 显示当前会话有多少函数可用 SHOW FUNCTIONS; 如: hive> show functions ; OK ! != % 显示函数的描述信息 DE ...

- hive中order by,sort by, distribute by, cluster by作用以及用法

1. order by Hive中的order by跟传统的sql语言中的order by作用是一样的,会对查询的结果做一次全局排序,所以说,只有hive的sql中制定了order by所有的数据都会 ...

- 在Hive中执行DDL之类的SQL语句时遇到的一个问题

在Hive中执行DDL之类的SQL语句时遇到的一个问题 作者:天齐 遇到的问题如下: hive> create table ehr_base(id string); FAILED: Execut ...

- hive处理json数据_(转)hive中解析json数组

hive中解析一般的json是很容易的,get_json_object就可以了. 但如果字段是json数组,比如 [{"bssid":"6C:59:40:21:05:C4 ...

- hive中时间操作(一)

转:https://blog.csdn.net/u012474716/article/details/78925319/ hive中常用的时间为时间戳和日期格式之间的转换 常用的函数为: to_dat ...

最新文章

- Nature: 海洋病毒对环境基因组和潜在的生物地球化学影响

- POJ 3855 计算几何·多边形重心

- 异常分发(用户异常)

- resin常见有关问题

- [2017.11.11特辑]以一个光棍节表白案例浅谈ECMAScript6模块化的使用方法

- Spring结合Quartz实现多任务定时调用(转载)

- request获取各种路径记录

- Python 常用命令

- 如何git-cherry-pick仅更改某些文件?

- 苹果mac高效先进的网页开发工具:Espresso

- networkxpdf_1 NetworkX概述

- PHP做好防盗链的基本思想 防盗链的设置方法

- 翻译: 自动驾驶汽车技术:自动驾驶汽车如何工作?

- mybatis源码解析

- 虚拟电厂 3D 可视化,节能减排绿色发展

- CATIA V5-6 R2017 支架类零件设计

- ‘pom.xml‘ has syntax errors

- html 图片自动滚动播放,CSS3如何实现图片滚动播放效果(附代码)_WEB前端开发...

- .NET 程序员有家了,微软推出官方技术社区论坛

- 21天精通python电子版_小白21天精通Python是如何做到的?